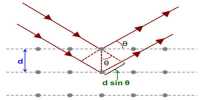

W. L. Bragg and W. H. Bragg derived a mathematical relation for determining interatomic distances from X-ray diffraction patterns. The scattering of X-rays by a crystal can be treated as reflection from successive planes of atoms in the crystal. Unlike the reflection of ordinary light, however, X-rays are reflected only at certain angles, which are determined by the wavelength of the X-rays and the distance between the planes in the crystal. The fundamental equation relating the wavelength of the X-rays, the interplanar distance in the crystal and the angle of reflection is known as Bragg’s equation.

Bragg’s equation is nλ = 2d sinθ

where n is the order of reflection (a positive integer: 1, 2, 3, ...)

λ is the wavelength of X-rays

d is the interplanar distance in the crystal

θ is the angle of reflection, measured between the incident X-ray beam and the reflecting plane of atoms
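
As a rough illustration of how Bragg’s equation is used, the sketch below rearranges nλ = 2d sinθ to give the interplanar distance d, and lists the angles at which reflection can occur for a given λ and d. The function names `interplanar_distance` and `allowed_angles` are hypothetical, and the numerical values (λ = 1.54 Å, θ = 30°) are assumed purely for the example; they are not taken from the text above.

```python
import math


def interplanar_distance(wavelength, theta_deg, order=1):
    """Solve n*lambda = 2*d*sin(theta) for d.

    The result has the same length unit as `wavelength`.
    `theta_deg` is the angle of reflection in degrees.
    """
    return order * wavelength / (2.0 * math.sin(math.radians(theta_deg)))


def allowed_angles(wavelength, d):
    """List (n, theta in degrees) for every order n that can reflect.

    Reflection of order n is possible only while sin(theta) = n*lambda/(2d)
    does not exceed 1. Assumes wavelength > 0 and d > 0.
    """
    angles = []
    n = 1
    while n * wavelength / (2.0 * d) <= 1.0:
        sin_theta = n * wavelength / (2.0 * d)
        angles.append((n, math.degrees(math.asin(sin_theta))))
        n += 1
    return angles


if __name__ == "__main__":
    # Illustrative values only (assumed): wavelength in angstroms, angle in degrees.
    d = interplanar_distance(wavelength=1.54, theta_deg=30.0, order=1)
    print("d =", d, "angstrom")
    for n, theta in allowed_angles(wavelength=1.54, d=d):
        print("order", n, "reflects at theta =", theta, "degrees")
```

With these assumed values, sin 30° = 0.5, so the first-order reflection gives d ≈ 1.54 Å. Because sinθ cannot exceed 1, only a limited number of orders n satisfy the equation, which is why reflection occurs only at certain angles.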